TL;DR: Pickle is slow, cPickle is faster, JSON is surprisingly fast, and Marshal is fastest, but under PyPy they all fall before a humble text file parser.

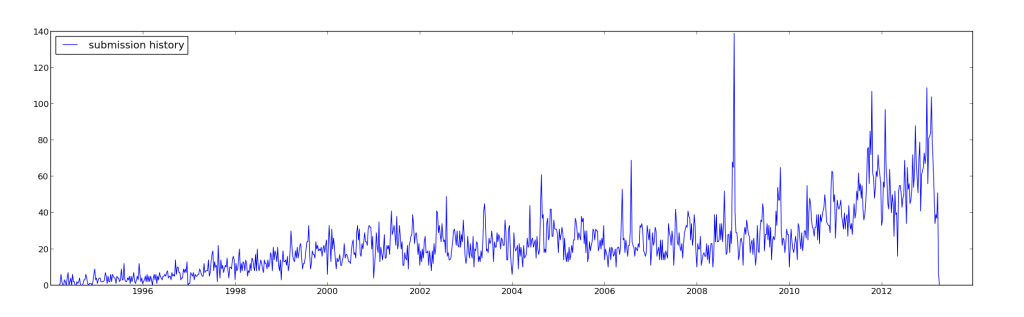

I’ve been working on the Yandex Personalized Web Search challenge on Kaggle, which requires me to read in a large amount of data stored as multi-line, tab-separated records. The data is ragged: each record has sub-fields of variable length, and there are 34.5 million of them. There are too many records to load into memory, and starting out, I’m not quite sure which features will end up being important. To reduce the bottleneck of reading the files from disk, I wanted to pre-process the data and store it in a format that afforded me faster reading from and writing to disk, without going to a full-blown database solution. I ended up testing the following formats:

- pickle (using both the pickle and cPickle modules), which can serialize just about any data structure, but is only available for Python. I’ve used it extensively to persist derived data for work.

- JSON, which only supports a basic set of data types (fine for this use case), but can be handled by a larger number of languages (irrelevant for this use case).

- Marshal, which is not really meant for general serialization, but I was curious about its internals. This is not really a recommended format; the documentation clearly warns: “Details of the format are undocumented on purpose; it may change between Python versions.”
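To make the three candidates concrete, here is a minimal sketch that round-trips one ragged record through each of them. The record layout (`session_id`, `queries`, `terms`, `urls`) is made up for illustration and is not the actual competition format. The sketch also assumes Python 3, where there is no separate cPickle module because `pickle` uses the C implementation automatically when it is available. It only checks that each format restores the record and reports the serialized size; it says nothing about speed.

```python
import json
import marshal
import pickle

# Hypothetical ragged record: each query carries a variable number of
# term ids and URL ids. This layout is an assumption for illustration only.
record = {
    "session_id": 42,
    "queries": [
        {"terms": [101, 102], "urls": [7, 8, 9]},
        {"terms": [103], "urls": [10]},
    ],
}


def round_trip(name, dumps, loads):
    """Serialize the record to bytes, restore it, and report size and equality."""
    blob = dumps(record)
    restored = loads(blob)
    return name, len(blob), restored == record


results = [
    round_trip(
        "pickle",
        lambda obj: pickle.dumps(obj, protocol=pickle.HIGHEST_PROTOCOL),
        pickle.loads,
    ),
    round_trip(
        "json",
        # json.dumps returns str, so encode it to compare sizes in bytes.
        lambda obj: json.dumps(obj).encode("utf-8"),
        lambda blob: json.loads(blob.decode("utf-8")),
    ),
    round_trip("marshal", marshal.dumps, marshal.loads),
]

for name, size, ok in results:
    print(f"{name:8s} {size:4d} bytes  round-trip ok: {ok}")
```

All three handle this record because it contains only dicts, lists, strings, and integers. With JSON, that restriction is the whole point: dictionary keys must be strings, and a tuple would come back as a list.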